Interesting indeed.

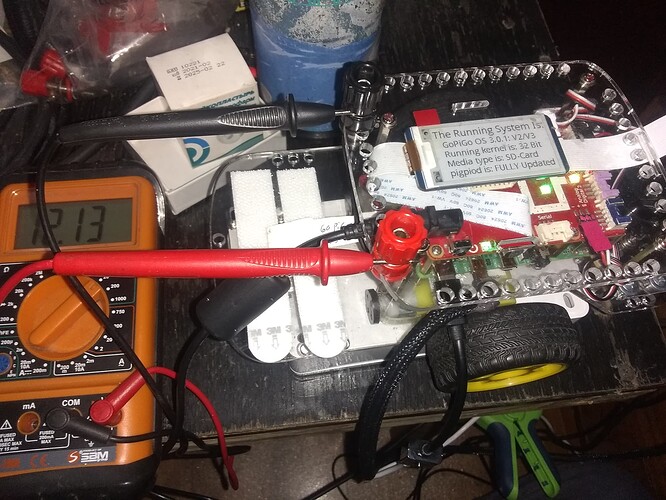

I don’t have a fixed voltage source, so I made some measurements based on an initial voltage reading on Carl.

(Carl was also running voicecommander, juicer, wheel logger, health check, and life logger.)

I added an optional command line argument for the sleep_interval, and removed the rounding of the measured voltage differential after testing showed that the means printed with {:.3f} were identical to the means of the rounded values.

(I was worried about compounding rounding error into the means and std deviations.)
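For anyone who wants to repeat that check, here is a minimal sketch. It assumes made-up sample values (not Carl's readings) and uses Python's built-in `round()` rather than the script's `round_up()`, so ties may round differently. It compares the mean and population standard deviation of raw values against the same values rounded to three decimals.

```python
import random
import statistics

random.seed(42)  # fixed seed so the sketch is repeatable

# Hypothetical voltage differentials, roughly -0.08 V with 0.04 V spread
raw = [random.gauss(-0.08, 0.04) for _ in range(100)]

# Same samples rounded to 3 decimals before any statistics are taken
rounded = [round(v, 3) for v in raw]

# How far rounding each sample moves the mean and the standard deviation
mean_shift = abs(statistics.mean(raw) - statistics.mean(rounded))
sdev_shift = abs(statistics.pstdev(raw) - statistics.pstdev(rounded))

print("mean  raw {:.3f}  rounded {:.3f}".format(
    statistics.mean(raw), statistics.mean(rounded)))
print("sdev  raw {:.3f}  rounded {:.3f}".format(
    statistics.pstdev(raw), statistics.pstdev(rounded)))
print("shift in mean {:.6f}  shift in sdev {:.6f}".format(mean_shift, sdev_shift))
```

Each sample moves by at most half of the last kept digit (0.0005), so the mean cannot move by more than that either, which is below what {:.3f} usually shows.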

Additionally, I wanted to see how the min/max/mean/sdev varied so here are:

- two 10 second runs at 1.0 s

- two 10 second runs at 0.1 s

- two 60 second runs at 1.0 s

- two 60 second runs at 0.1 s

- two 120 second runs at 1.0 s

- two 120 second runs at 0.1 s

We took 10 measurements at 1.0 seconds (based on reference voltage of 10.057)

min: -0.128 max: 0.000 mean: -0.071 sdev: 0.043

We took 103 measurements at 0.1 seconds (based on reference voltage of 9.997)

min: -0.205 max: 0.000 mean: -0.160 sdev: 0.037

We took 105 measurements at 0.1 seconds (based on reference voltage of 10.091)

min: -0.103 max: 0.060 mean: -0.056 sdev: 0.026

We took 10 measurements at 1.0 seconds (based on reference voltage of 10.048)

min: -0.120 max: 0.000 mean: -0.075 sdev: 0.033

We took 60 measurements at 1.0 seconds (based on reference voltage of 10.022)

min: -0.129 max: 0.085 mean: -0.084 sdev: 0.038

We took 60 measurements at 1.0 seconds (based on reference voltage of 9.98)

min: -0.145 max: 0.043 mean: -0.108 sdev: 0.038

We took 605 measurements at 0.1 seconds (based on reference voltage of 9.98)

min: -0.120 max: 0.120 mean: -0.072 sdev: 0.033

We took 638 measurements at 0.1 seconds (based on reference voltage of 9.851)

min: -0.214 max: 0.017 mean: -0.158 sdev: 0.036

We took 1200 measurements at 0.1 seconds (based on reference voltage of 9.466)

min: -0.154 max: 0.146 mean: -0.091 sdev: 0.044

We took 121 measurements at 1.0 seconds (based on reference voltage of 9.466)

min: -0.128 max: 0.035 mean: -0.083 sdev: 0.037

We took 120 measurements at 1.0 seconds (based on reference voltage of 9.483)

min: -0.103 max: 0.086 mean: -0.056 sdev: 0.043

We took 1202 measurements at 0.1 seconds (based on reference voltage of 9.431)

min: -0.146 max: 0.154 mean: -0.096 sdev: 0.042

pi@Carl:~/Carl/Examples/voltagereadspeed $

I don’t think I can see a significant difference between the 1.0 s readings and the 0.1 s readings,

but it might take a lot more tests to be confident of that.
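One rough way to line the runs up side by side is to group the twelve summaries above by read interval. This is only a sketch: the tuples are copied by hand from the output above, the dictionary layout is my own choice, and the average is a plain unweighted one across runs.

```python
from collections import defaultdict
import statistics

# (interval_s, count, mean, sdev) copied from the twelve summaries above
runs = [
    (1.0, 10, -0.071, 0.043),
    (0.1, 103, -0.160, 0.037),
    (0.1, 105, -0.056, 0.026),
    (1.0, 10, -0.075, 0.033),
    (1.0, 60, -0.084, 0.038),
    (1.0, 60, -0.108, 0.038),
    (0.1, 605, -0.072, 0.033),
    (0.1, 638, -0.158, 0.036),
    (0.1, 1200, -0.091, 0.044),
    (1.0, 121, -0.083, 0.037),
    (1.0, 120, -0.056, 0.043),
    (0.1, 1202, -0.096, 0.042),
]

# Collect the per-run standard deviations under each read interval
sdevs_by_interval = defaultdict(list)
for interval, count, mean, sdev in runs:
    sdevs_by_interval[interval].append(sdev)

# Summarize each interval: number of runs, average sdev, and sdev range
summary = {}
for interval, sdevs in sorted(sdevs_by_interval.items()):
    summary[interval] = {
        "runs": len(sdevs),
        "mean_sdev": statistics.mean(sdevs),
        "min_sdev": min(sdevs),
        "max_sdev": max(sdevs),
    }
    print("{:.1f} s: {} runs, sdev avg {:.3f} (range {:.3f} to {:.3f})".format(
        interval, len(sdevs), statistics.mean(sdevs), min(sdevs), max(sdevs)))
```

With these numbers the average per-run sdev comes out near 0.039 at 1.0 s and near 0.036 at 0.1 s, and the ranges overlap, which fits the "no obvious difference" impression. The per-run means are not compared here because each run used a different reference voltage.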

Having a nice fixed voltage source would allow longer runs,

but perhaps the standard deviation is related to the varying processor load more than A2D variation.

I have no idea how to characterize the constantly changing processor load.

If I were going to hypothesize why 0.1 s readings might be more accurate than 1.0 s readings,

I would propose that the processor load changes less in 0.1 seconds than in 1.0 seconds,

but that is just wild, free-running brainstorming.
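One possible way to put a number on the processor load is to sample `/proc/stat` between voltage readings and see whether the busy fraction tracks the voltage differential. The sketch below is untested on Carl and rests on assumptions: it expects a Linux `/proc/stat`, all the helper names (`parse_cpu_line`, `busy_fraction`, `pearson`, `collect`) are made up for illustration, and `read_voltage` stands in for any zero-argument callable such as `mybot.get_voltage_battery`.

```python
import time


def parse_cpu_line(line):
    """Return (busy, total) jiffies from the aggregate 'cpu' line of /proc/stat."""
    fields = [int(v) for v in line.split()[1:9]]  # user .. steal
    idle = fields[3] + fields[4]                  # idle + iowait
    total = sum(fields)
    return total - idle, total


def read_cpu_times(path="/proc/stat"):
    """Read the first line of /proc/stat (Linux only)."""
    with open(path) as f:
        return parse_cpu_line(f.readline())


def busy_fraction(prev, cur):
    """Fraction of CPU time spent busy between two (busy, total) samples."""
    d_busy = cur[0] - prev[0]
    d_total = cur[1] - prev[1]
    return d_busy / d_total if d_total > 0 else 0.0


def pearson(xs, ys):
    """Pearson correlation coefficient; returns 0.0 if either series is constant."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        return 0.0
    return sxy / (sxx * syy) ** 0.5


def collect(read_voltage, samples, interval):
    """Pair each voltage differential with the CPU busy fraction since the last read."""
    reference = read_voltage()
    prev = read_cpu_times()
    loads, diffs = [], []
    for _ in range(samples):
        time.sleep(interval)
        cur = read_cpu_times()
        loads.append(busy_fraction(prev, cur))
        diffs.append(reference - read_voltage())
        prev = cur
    return loads, diffs
```

On the robot this might be used as `loads, diffs = collect(mybot.get_voltage_battery, 100, 0.1)` followed by `print(pearson(loads, diffs))`. A value near zero would suggest the load is not what drives the spread. The kernel counters tick in coarse steps, so at 0.1 s the busy fraction will be fairly grainy.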

How I changed the program:

    #!/usr/bin/python3
    # FILE: batt_test.py
    # PURPOSE: Characterize GoPiGo3 battery voltage measurements
    """
    USAGE: ./batt_test.py [-h] [-i INTERVAL]

    optional arguments:
      -h, --help            show this help message and exit
      -i INTERVAL, --interval INTERVAL
                            (n.n in seconds, default=1.0)
    """

    import sys
    from easygopigo3 import EasyGoPiGo3
    from time import sleep
    import argparse
    import statistics

    argparser = argparse.ArgumentParser(description='batt_test.py Characterize GoPiGo3 battery voltage measurements')
    argparser.add_argument('-i', '--interval', dest='interval', type=float, help="n.n in seconds, default=1.0", default=1.0)
    args = argparser.parse_args()
    sleep_interval_seconds = args.interval

    mybot = EasyGoPiGo3()

    # value = 0
    values = []
    count = 0
    # Reference_Input_Voltage = 12.0
    Reference_Input_Voltage = mybot.get_voltage_battery()
    file1 = open("./voltage_test.txt", "a")

    def round_up(x, decimal_precision=2):
        # "x" is the value to be rounded using 4/5 rounding rules
        # always rounding away from zero
        #
        # "decimal_precision" is the number of decimal digits desired
        # after the decimal divider mark.
        #
        # It returns the **LESSER** of:
        # (a) The number of digits requested
        # (b) The number of digits in the number if less
        #     than the number of decimal digits requested
        # Example: (Assume decimal_precision = 3)
        # round_up(1.123456, 3) will return 1.123. (4 < 5)
        # round_up(9.876543, 3) will return 9.877. (5 >= 5)
        # round_up(9.87, 3) will return 9.87
        # because there are only two decimal digits and we asked for 3
        #
        if decimal_precision < 0:
            decimal_precision = 0

        exp = 10 ** decimal_precision
        x = exp * x

        if x > 0:
            val = (int(x + 0.5) / exp)
        elif x < 0:
            val = (int(x - 0.5) / exp)
        else:
            val = 0

        if decimal_precision <= 0:
            return (int(val))
        else:
            return (val)

    try:
        print("batt_test with read interval {:.2f} seconds".format(sleep_interval_seconds))
        while True:
            # Measured_Battery_Voltage = round_up(mybot.get_voltage_battery(), 3)
            Measured_Battery_Voltage = mybot.get_voltage_battery()
            Five_v_System_Voltage = round_up(mybot.get_voltage_5v(), 3)
            # Measured_voltage_differential = round_up((Reference_Input_Voltage - Measured_Battery_Voltage),3)
            Measured_voltage_differential = Reference_Input_Voltage - Measured_Battery_Voltage
            # value = value + Measured_voltage_differential
            values.append(Measured_voltage_differential)
            count = count+1
            print("Measured Battery Voltage =", Measured_Battery_Voltage)
            print("Measured voltage differential = ", Measured_voltage_differential)
            print("5v system voltage =", Five_v_System_Voltage, "\n")
            print("Total number of measurements so far is ", count)
            sleep(sleep_interval_seconds)

    except KeyboardInterrupt:
        print("\nThat's All Folks!\n")
        data="\nWe took " + str(count) + " measurements at {:.1f}".format(sleep_interval_seconds) + " seconds (based on reference voltage of " + str(Reference_Input_Voltage) + ")"
        print(data)
        file1.write(data)
        file1.write("\n")
        data="min: {:.3f} max: {:.3f} mean: {:.3f} sdev: {:.3f} \n".format(min(values), max(values), statistics.mean(values), statistics.pstdev(values))
        print(data)
        file1.write(data)
        file1.close()
        sys.exit(0)

At least it wasn’t a
