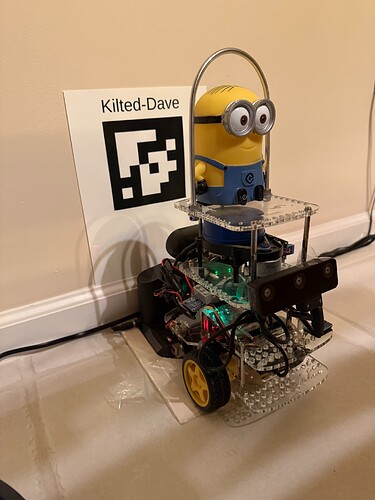

Couldn’t resist trying the MoonDream vision language assistant on Dave and WaLI.

Now WaLI sports an 8GB Pi 5 and Dave only has a 4GB Pi 4, but why not.

Here is the pic:

So first we ask Dave “What do you see?” (using the MoonDream 0.5b model, 693 MB):

(moondream_006_venv) ubuntu@kilteddave:~/KiltedDave/systests/moondream/examples_with_API_006$ ./see_wali_and_dave.py

Using moondream-0.5b model with moondream 0.0.6 Python API

Model Load Time: 19.13 seconds

Image Load and Encode Time: 36.69 seconds

Query Time: 55.68 seconds

What do you see?

The image features a man sitting next to a table with a collection of Lego toys.

There are various Lego models and a small camera on the table,

as well as a sign that reads “TBS-WORLD.”

The man appears to be posing for a photo with the Lego items,

showcasing the fun and creative aspects of the Lego hobby.

ubuntu@kilteddave:~/KiltedDave/ros2ws$ ./status.sh

********** Humble Dave 2 ROS2 GoPiGo3 Status ***********

Saturday 02/21/26

22:30:03 up 1:39, 3 users, load average: 4.07, 1.49, 0.74

temp=68.6'C

frequency(48)=1800404352

throttled=0x0

GoPiGo3 Battery Voltage: 12.3 volts

total used free shared buff/cache available

Mem: 3.7Gi 2.6Gi 115Mi 17Mi 1.1Gi 1.1Gi

Swap: 0B 0B 0B

So using the half-billion-parameter moondream model on a 4GB Raspberry Pi 4:

- Used 100% of the Pi 4 (all four cores)

- Used 1.9GB memory

- Took 19 seconds to load the model

- Took 37 seconds to “encode” the image

- Took 56 seconds to answer “What do you see?”
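For anyone wanting to collect the same three numbers, here is a minimal sketch of timing each stage separately. It is not the actual `see_wali_and_dave.py` script. The `timed` and `run_stages` helpers, the model path, and the image file name are all made up for illustration, and the `md.vl(model=...)`, `encode_image`, and `query(...)["answer"]` calls are my understanding of the moondream 0.0.6 Python API, so check them against the version you have installed.

```python
import time
from typing import Any, Callable, Dict, List, Tuple


def timed(fn: Callable[..., Any], *args: Any, **kwargs: Any) -> Tuple[Any, float]:
    """Call fn and return (result, elapsed wall-clock seconds)."""
    start = time.perf_counter()
    result = fn(*args, **kwargs)
    return result, time.perf_counter() - start


def run_stages(load_model, load_image, model_path: str, image_path: str,
               prompts: List[str]) -> Dict[str, Any]:
    """Time model load, image load+encode, and each query separately.

    load_model and load_image are passed in so they can be swapped for
    stubs on a machine that does not have the model installed.
    """
    model, load_s = timed(load_model, model_path)
    print(f"Model Load Time: {load_s:.2f} seconds")

    encoded, encode_s = timed(lambda: model.encode_image(load_image(image_path)))
    print(f"Image Load and Encode Time: {encode_s:.2f} seconds")

    timings: Dict[str, Any] = {"load": load_s, "encode": encode_s, "queries": []}
    for prompt in prompts:
        # The encoded image is reused, so extra prompts only pay the query cost.
        reply, query_s = timed(model.query, encoded, prompt)
        print(f"Query Time: {query_s:.2f} seconds")
        print(prompt)
        print(reply["answer"])
        timings["queries"].append(query_s)
    return timings


def main() -> None:
    # Imported here so the helpers above work without these packages installed.
    import moondream as md   # assumed: pip install moondream==0.0.6
    from PIL import Image    # assumed: pip install pillow

    run_stages(
        load_model=lambda path: md.vl(model=path),
        load_image=Image.open,
        model_path="moondream-0_5b-int8.mf.gz",  # hypothetical local path
        image_path="wali_and_dave.jpg",          # hypothetical file name
        prompts=["What do you see?"],
    )
```

Calling `main()` on the robot would print the stage timings in the same shape as the output shown earlier. Passing more than one prompt reuses the encoded image instead of encoding it again.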

Now turning to TurtleBot5-WaLI with the 2-billion-parameter moondream model on an 8GB Raspberry Pi 5:

- Used 100% of the Pi 5 (all four cores)

- Used 5GB memory

- Took 21 seconds to load the (2.04GB) model

- Took 29 seconds to “encode” the image

- Took 41 seconds to answer “What do you see?”

and what it said:

The image features a man sitting next to a robot, which is placed on a table.

The robot is positioned in the center of the table, while the man is situated on the left side.

The robot appears to be a small, possibly toy-like, model.
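To put the two runs side by side, here is a quick bit of arithmetic using only the rounded per-stage timings from the two bullet lists above. The dictionary labels are just names I picked for this sketch.

```python
# Rounded timings (seconds) copied from the two bullet lists above.
runs = {
    "Dave (Pi 4, 0.5b)": {"load": 19, "encode": 37, "query": 56},
    "WaLI (Pi 5, 2b)": {"load": 21, "encode": 29, "query": 41},
}

# Total time from starting the script to getting an answer.
totals = {name: sum(stages.values()) for name, stages in runs.items()}
for name, total in totals.items():
    print(f"{name}: {total} s from start to answer")

dave = totals["Dave (Pi 4, 0.5b)"]
wali = totals["WaLI (Pi 5, 2b)"]
print(f"WaLI finished in {wali / dave:.0%} of Dave's time")
```

That works out to 112 s for Dave and 91 s for WaLI, so the Pi 5 ran the roughly four times larger model in about 81% of the time the Pi 4 needed for the small one.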

==============

Now let’s see what Dave can do if we give him the hint:

In this picture there is a man and two robots. Describe the robots.

Query Time: 37.45 seconds

The image shows a man and two robots.

One of the robots is a TARDIS, a type of robot that resembles the character from the television series Doctor Who.

The other robot is likely a TARDIS-themed robot, but it is not possible to confirm their exact relationship or association with the man in the image.

====